The Internet of Things, or IoT, is vast, projected to reach nearly 50 billion “things” by 2020 according to Philip Howard. The IoT is also nebulous. Defined as a network of physical objects or “things” embedded with electronics, software, sensors and connectivity, the IoT as we know it today includes devices as diverse as heart monitoring implants, biochip transponders on farm animals, automobiles with built-in sensors, refrigerators providing online status, bio-hazardous particulate sensors, centrally scheduled and monitored outdoor lighting, distributed net-energy meters, and many more. Plus, who knows what kinds of things are on the drawing board?

Connecting all these things to the Internet is a certainty. Economics ensure it. Publicly traded companies making data center hardware and software, delivering connectivity plumbing like fiber, providing cloud services, or offering mobile services like smartphone connectivity are all looking for that next hundred million in revenue offered by an emerging market of 50 billion things. Plus, like those bulls in Pamplona, startups are running toward this bullring of opportunity too, hoping to create the killer app or uncover the dominant business model for all these things. How, though, will all these things get connected? Why, wirelessly of course.

Cellular

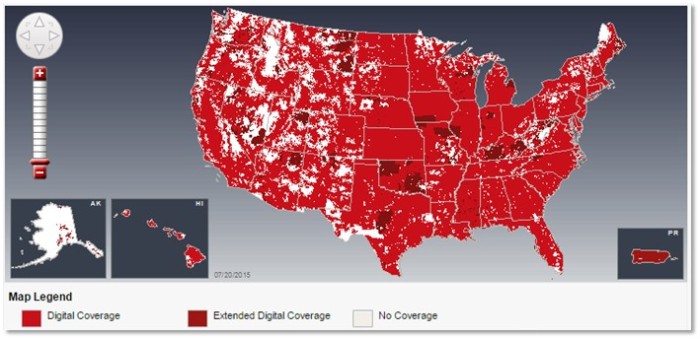

The edge of the Internet is hard to get to, because it is either remote or always moving or both. If it were easy, it would already be part of the Internet! Imagine an IoT application that utilizes RFID tags to track tools and equipment assigned to a truck and used by a field service worker. Depending upon the job and the day, this field service truck and worker may be in town where connectivity is easy, or way out of town at a remote, high-value asset like a pipeline pump station. Only a wireless connection works in both situations. One glimpse of a typical cellular provider’s mobile data coverage map and it’s easy to see that cellular coverage is prodigious. Ever-increasing average revenue per user (ARPU), powering a never-ending rollout of faster data connections (… 2G, 3G, 4G/LTE …), has ensured that cellular is very nearly everywhere. This fact stands in stark contrast to the many failures of municipal Wi-Fi, doomed by technical, economic and business model shortcomings, and foreshadows a future bathed in cellular.

Cellular, however, is not the Internet per se because it does not operate using the Internet protocol suite known as TCP/IP. Yet cellular routers and mobile applications with their cloud services have become quite adept at marshaling TCP/IP payloads across cellular networks, so cellular is perfectly capable of being used to extend the edge of the Internet as far and wide as cellular networks reach today, and tomorrow.

Spanning Networks

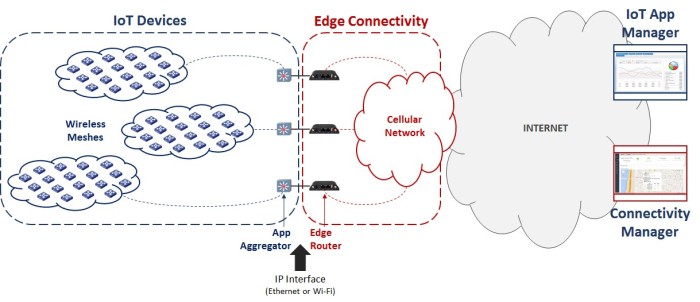

At the Internet’s edge, IoT application developers are hard at work building businesses on devices connected to the Internet via a spanning network. First, a spanning network extends the reach of the Internet using cellular, from a cell tower outward, as far as a cell tower can reach, the so-called last mile of connectivity. Then, from the edge of a cell tower’s reach, a spanning network extends the Internet even further. Wired protocols like power-line communication (PLC) for gas, water and power meters or RS-485 for fieldbus devices have been used in the past, but the ease and economics of wireless mesh networks like Zigbee and its many variants are rendering wired protocols obsolete. So more commonly, the last foot of connectivity is provided by wireless mesh protocols.

Each IoT application needs a spanning network. The nuts and bolts of this network are built from standard, off-the-shelf components like cellular routers and RF radios, but the performance characteristics of a spanning network are very application specific. How big are the payloads? How far and wide must payloads be distributed and through what routes? With what frequency must payloads be uploaded and downloaded? These application details along with the economics involved in operating a spanning network at scale drive the success of an IoT application, perhaps even more than its functionality.

Designing, testing and then operationalizing a spanning network at scale is nontrivial. Delicately balancing throughput requirements against cellular data plan requirements and costs is just one of the key drivers, but one that has an oversized effect on operational costs. How many wireless mesh nodes worth of payloads can a single cellular edge router manage? How many cellular edge routers are required to cover an IoT application’s service area? How big a machine-to-machine data plan does each cellular edge router need and what will the costs be in full operation?
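A back-of-the-envelope sketch of those three questions might look like the following. Everything in it is an assumption for illustration: the function name, the even spread of nodes across routers, the 20% plan headroom and the flat price per megabyte are all hypothetical, and real carrier plans are rarely priced this simply.

```python
import math

def size_spanning_network(mesh_nodes, mb_per_node_month, nodes_per_router,
                          usd_per_mb, headroom=0.2):
    """Rough sizing of routers, data plan and monthly cost (illustrative only)."""
    # How many routers are needed if each can backhaul nodes_per_router nodes?
    routers = math.ceil(mesh_nodes / nodes_per_router)
    # Assume nodes are spread evenly; size the plan for the busiest router.
    busiest_router_nodes = math.ceil(mesh_nodes / routers)
    plan_mb = busiest_router_nodes * mb_per_node_month * (1 + headroom)
    # Assumed flat per-megabyte pricing, identical plan on every router.
    monthly_cost = routers * plan_mb * usd_per_mb
    return {"routers": routers, "plan_mb": plan_mb, "monthly_cost_usd": monthly_cost}

# Hypothetical deployment: 1,000 mesh nodes at 1.5 MB per node per month.
estimate = size_spanning_network(
    mesh_nodes=1000, mb_per_node_month=1.5, nodes_per_router=120, usd_per_mb=0.05
)
print(estimate)
```

Even a crude model like this makes the sensitivity obvious: halving the nodes each router can manage roughly doubles both the hardware count and the number of data plans.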

Early investigations on these topics during the IoT application design phase can be dramatically simplified by a cellular edge router with the right functionality. The same is true during testing, operational scaling and even maintenance over a service agreement’s term. A cellular edge router designed for IoT application developers would support these features and functions:

- Application Agnostic

- Cloud Connected

- Virtualization

- Wireless Mesh NAT and DHCP

- Bandwidth Modeling

- Energy Management

- Differential Monitoring

Application Agnostic

Attempting to be the Ginsu Knife of cellular edge routers for IoT developers is folly. There is no way to predict how a customer in a particular vertical market segment will wish to integrate IoT devices with a spanning network, and even if you could, satisfying such a disparate set would bloat a cellular edge router and render performance for any specific customer lame. Companies have struggled for years trying to be just such a solution.

Instead, the paradigm needs to change. The interface between a specific IoT application and the cellular edge router becomes Ethernet only, with the app developer encapsulating their app logic into an Ethernet push device like Synapse Wireless’ SNAP Connect E10 and E20 or EKM Metering’s EKM Push. This push device has intimate knowledge of the payloads being exchanged between the wireless mesh and the cloud as well as message semantics, recovery strategies and the like, which frees the cellular edge router to focus solely on optimized TCP/IP payload exchange.

Cloud Connected

Public IP addresses are too valuable a resource to dole out willy-nilly, so carriers assign private IP addresses to cellular edge routers instead. Reaching a router at its private address to configure, troubleshoot or optimize it then requires a VPN connection into the carrier’s network. For initial configuration a VPN client and configuration may not be necessary because the router can be connected locally to a computer via an Ethernet cable, but once the router heads into the R&D lab or out into the wild it’s a different story. VPN client licensing and configuration across multiple roles within an organization is an unwieldy proposition at best, and one that gets disproportionately worse as the number of routers grows. Edge router support, troubleshooting and optimizations over time to maintain an IoT application’s spanning network must be simple and low-cost.

The solution is for a cellular edge router targeting IoT application developers to be “cloud connected”. Instead of connecting directly to an edge router’s configuration webpage through a VPN pipe, the IoT app developer securely logs into a cloud service provided by the edge router manufacturer in order to manage the edge router’s configuration initially as well as over time. Once provisioned into the carrier’s network, the edge router receives all of its configuration parameters from this manufacturer cloud service while pushing router monitoring and status information to the cloud service as well. No VPN client is required. No direct connection to the edge router’s webpage is needed either. The IoT app developer can then manage roles, authentication and authorization to specific edge routers in a way that is consistent with other managed devices. What’s more, a cloud service enables a stickier, longer-lasting relationship between the edge router manufacturer and the IoT app developer that improves monetization over time and can help fund cloud service development for the cellular edge router manufacturer.

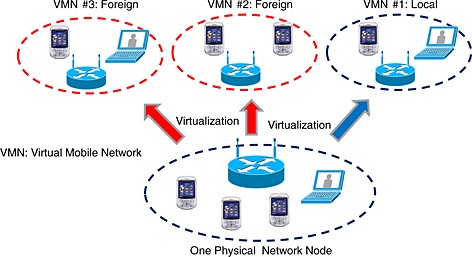

Virtualization

Virtualization has been an economic boon for corporate IT and datacenters because of its dramatic improvement in utilization. Shared connectivity was the enabling technology, lower operating costs the benefit.

A similar benefit occurs when all of the edge routers in a spanning network share connectivity as they do when cloud connected. Cellular data plan utilization improves. In fact, the IoT application developer can optimize this part of their business, which can have a huge impact on the bottom line when considered across many customer installations.

Additionally, spanning network reliability improves. Each cellular edge router’s configuration for a particular IoT application resides in the cloud, simplifying failover and reducing downtime. A cellular edge router can be re-flashed in minutes. Or the router can be swapped in the field by lower cost field resources that know nothing about the IoT application, and then flashed and spun up remotely by the application developer. A spanning network can even be designed with overlapping meshes and overhead bandwidth so that a single cellular edge router can temporarily backhaul multiple meshes should an edge router fail in the field. This temporary releveling can be done remotely to preserve uptime at the expense of throughput, but then returned once the edge router gets replaced, all without rolling a truck.
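The temporary releveling described here can be sketched as a small planning routine. This is a minimal illustration under stated assumptions, not any vendor's failover logic: the function name, the `reachable` map of which routers overlap which mesh, and the megabyte figures are all hypothetical.

```python
def relevel(assignments, reachable, capacity_mb, load_mb, failed):
    """Reassign the failed router's meshes to overlapping routers with headroom.

    assignments: router -> list of meshes it currently backhauls
    reachable:   mesh -> set of routers whose radio coverage overlaps that mesh
    capacity_mb: router -> monthly megabytes the router's plan can carry
    load_mb:     mesh -> monthly megabytes the mesh generates
    """
    plan = {r: list(meshes) for r, meshes in assignments.items() if r != failed}

    def headroom(router):
        return capacity_mb[router] - sum(load_mb[m] for m in plan[router])

    unplaced = []
    for mesh in assignments[failed]:
        candidates = [r for r in plan
                      if r in reachable[mesh] and headroom(r) >= load_mb[mesh]]
        if candidates:
            # Prefer the surviving router with the most spare bandwidth.
            plan[max(candidates, key=headroom)].append(mesh)
        else:
            unplaced.append(mesh)  # no overlap or no headroom: roll a truck
    return plan, unplaced

assignments = {"r1": ["meshA"], "r2": ["meshB"], "r3": ["meshC"]}
reachable = {"meshA": {"r1", "r2"}, "meshB": {"r2", "r3"}, "meshC": {"r3"}}
capacity_mb = {"r1": 300, "r2": 300, "r3": 300}
load_mb = {"meshA": 120, "meshB": 150, "meshC": 100}

plan, unplaced = relevel(assignments, reachable, capacity_mb, load_mb, failed="r2")
print(plan, unplaced)
```

Reversing the move once the replacement router is online is just a matter of restoring the original assignments from the cloud.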

Wireless Mesh NAT and DHCP

Low power, low throughput wireless meshes are the norm for IoT applications because the operating expenses are more favorable. Unfortunately, these meshes do not use the Internet Protocol. Neither IP addresses nor protocols like DHCP are supported, but a cellular edge router designed for IoT application developers could deliver these capabilities. Using IPv6, a cellular edge router could individually address each node in an 802.15.4 mesh, and provide NAT as well as DHCP to simplify management from the cloud. These edge router features would further simplify the application design process for IoT developers, adding even more value.
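As one illustration of per-node addressing, the sketch below derives an IPv6 address for a mesh node from its 64-bit extended address using the modified EUI-64 rule for interface identifiers. This is an assumed scheme chosen for the example, not something any particular router is known to do; the function name, the documentation prefix `2001:db8:0:1::/64` and the sample EUI-64 are hypothetical.

```python
import ipaddress

def mesh_node_address(prefix: str, eui64: str) -> ipaddress.IPv6Address:
    """Build an IPv6 address from a /64 prefix and a node's 64-bit identifier."""
    network = ipaddress.IPv6Network(prefix)
    if network.prefixlen != 64:
        raise ValueError("expected a /64 prefix")
    raw = bytearray(bytes.fromhex(eui64.replace(":", "").replace("-", "")))
    if len(raw) != 8:
        raise ValueError("expected a 64-bit (8-byte) identifier")
    raw[0] ^= 0x02  # flip the universal/local bit (modified EUI-64)
    interface_id = int.from_bytes(raw, "big")
    return ipaddress.IPv6Address(int(network.network_address) | interface_id)

# Hypothetical node identifier under a documentation-only prefix.
addr = mesh_node_address("2001:db8:0:1::/64", "00:12:4b:00:01:02:03:04")
print(addr)
```

Because the address follows directly from the radio's identifier, the router would not need per-node state to answer "which node is this?", which keeps cloud-side management simple.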

Bandwidth Modeling

Many, possibly even most, IoT applications will be delivered as financed services so that the “customer” pays over time, often in the context of a performance contract. Financing adds a time dimension to the economics of a solution, and heightens the importance of operational expenses like cellular backhaul data plans to the overall value proposition of an IoT application. Initially identifying the optimal data plans becomes crucial. Maintaining the optimal data plans over time as carriers change plans also becomes essential.

A cellular edge router will not know the dollars per megabyte for its backhaul pipe, but it will know the megabytes moved through the gateway per month, which obviously drives data plan economics. Early on in the design of an IoT application, the megabytes per month for each class of IoT device must be determined and then used to model the throughput of each router in the IoT application’s spanning network. Making this analysis and optimization easy and then simplifying verification in the lab as well as out in the wild during a performance contract has huge value to the IoT application developer.

A set of bandwidth modeling steps might unfold like this:

- Connect an IoT device to the router using a wireless mesh and enable real time throughput monitoring, then run the device through its usage scenarios, keeping track of payload size and frequency (i.e., data usage) per scenario.

- Assemble a collection of IoT devices sharing a single wireless mesh along with a single router into a scaled down spanning network, then put the IoT devices through their combined usage scenarios to determine the maximum number of IoT devices a single router can effectively backhaul.

- Operate the scaled down spanning network beyond the router’s capacity to understand throughput failure modes and how to set alert thresholds for managing to a performance contract.

- Expand to a multi-router, multi-wireless mesh spanning network to fine tune the IoT application’s operational parameters and alerts, all the while using the router’s bandwidth modeling tools as the design feedback loop.

Throughout, easily accessible bandwidth information provided by the cellular edge router enables the IoT developer to economically deliver optimized spanning networks for the IoT application.
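The first two steps above boil down to simple arithmetic once payload sizes and frequencies have been recorded. The sketch below is a hypothetical illustration: the scenario names, payload sizes, the 250 MB plan and the 80% alert threshold are invented for the example, and a megabyte is taken as 1,000,000 bytes.

```python
from collections import defaultdict

BYTES_PER_MB = 1_000_000

def monthly_usage_mb(samples, runs_per_day, days=30):
    """Project monthly data usage per scenario from observed payloads.

    samples:      (scenario, payload_bytes) pairs captured during ONE run
                  of each usage scenario
    runs_per_day: scenario -> how many times that scenario runs per day
    """
    bytes_per_run = defaultdict(int)
    for scenario, payload_bytes in samples:
        bytes_per_run[scenario] += payload_bytes
    return {
        scenario: total * runs_per_day[scenario] * days / BYTES_PER_MB
        for scenario, total in bytes_per_run.items()
    }

def max_devices(plan_mb, device_mb_per_month, alert_fraction=0.8):
    """Devices one router can backhaul while staying under the alert threshold."""
    return int((plan_mb * alert_fraction) // device_mb_per_month)

# Hypothetical capture from a single metering device.
samples = [("heartbeat", 64), ("heartbeat", 64), ("meter_read", 512)]
usage = monthly_usage_mb(samples, runs_per_day={"heartbeat": 24, "meter_read": 96})
per_device = sum(usage.values())
print(usage, per_device, max_devices(250, per_device))
```

The estimate from a model like this is only a starting point; the third step, deliberately overloading the router, is what shows where the real ceiling sits.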

Energy Management

Remote IoT applications may be battery powered, so understanding the energy characteristics of a cellular edge router across a broad set of scenarios, as well as being able to affect the router’s energy profile programmatically and in real time, is crucial. However, even when the IoT application is not remote, energy matters. The cost of energy comes out of the IoT application developer’s top line revenue when providing a service. Nowhere is this truer than in IoT applications targeting the energy sector, where every kilowatt-hour generated or saved gets monetized.

The solution involves ensuring the IoT application developer has access to rich energy usage data for the cellular edge router as well as a programmatic way to affect the router’s energy profile over time. Like bandwidth data, energy usage data provided by the router helps during the design and test phase and then rolls into differential monitoring to help the IoT application developer craft, then meet, a customer performance contract.

Differential Monitoring

A typical wide area IoT application requires a spanning network with numerous cellular edge routers for backhaul. A successful IoT application developer will have many customers, each with an instance of a wide area IoT application spanning network. Therefore, a successful IoT application developer must manage a large number of routers. Proactively assessing each router’s ongoing performance one at a time is untenable at scale, even if doing so can be accomplished using a cloud service. Instead, differential monitoring hosted at the cellular edge router’s cloud service is the key. Granular, side-by-side, near real time graphs of many routers performing similar functions are the simplest way to identify anomalies at scale. Once an anomaly is identified, troubleshooting can begin.
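Side-by-side comparison can also be automated. The sketch below flags routers whose reading differs sharply from peers doing similar work, using a median-based modified z-score. This is a generic robust-statistics technique swapped in for illustration, not a feature of any known router cloud service; the function name, the 3.5 cutoff and the sample readings are assumptions.

```python
from statistics import median

def find_outliers(readings, cutoff=3.5):
    """Return router IDs whose reading is anomalous relative to their peers.

    readings: router ID -> a comparable metric, e.g. megabytes moved today
    """
    values = list(readings.values())
    center = median(values)
    # Median absolute deviation: a spread measure that outliers barely affect.
    mad = median(abs(v - center) for v in values)
    if mad == 0:
        # All peers agree exactly; anything different stands out.
        return [rid for rid, v in readings.items() if v != center]
    return [rid for rid, v in readings.items()
            if 0.6745 * abs(v - center) / mad > cutoff]

# Hypothetical daily megabytes for five routers serving similar meshes.
daily_mb = {"r1": 101, "r2": 98, "r3": 103, "r4": 99, "r5": 240}
print(find_outliers(daily_mb))  # ['r5']
```

Using the median rather than the mean matters here: a single misbehaving router would otherwise drag the baseline toward itself and hide its own anomaly.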

Exceptions to normal behavior, surfaced as alerts, are another form of differential monitoring. Performance thresholds that trigger alerts can be configured to proactively manage a spanning network’s performance to a service level agreement, a competitive advantage in the IoT space. Facilitating the performance analysis of an IoT application’s spanning network in order to craft the terms and conditions of a service level agreement, and then creating the associated alerts, enables the IoT developer to overdeliver in the eyes of the customer.
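Threshold alerts of this kind are simple to express. The following is a minimal sketch with hypothetical metric names and limits; a real service level agreement would define its own metrics and a cloud service would add notification and deduplication on top.

```python
from dataclasses import dataclass

@dataclass
class Threshold:
    metric: str
    limit: float
    alert_above: bool = True  # False means alert when the value drops below

def evaluate(router_id, metrics, thresholds):
    """Return one alert message per threshold the router currently breaches."""
    alerts = []
    for t in thresholds:
        value = metrics.get(t.metric)
        if value is None:
            continue  # metric not reported this interval
        breached = value > t.limit if t.alert_above else value < t.limit
        if breached:
            bound = "maximum" if t.alert_above else "minimum"
            alerts.append(f"{router_id}: {t.metric}={value} breaches {bound} {t.limit}")
    return alerts

# Hypothetical thresholds derived from a service level agreement.
sla = [
    Threshold("plan_used_pct", 80),
    Threshold("uptime_pct", 99.5, alert_above=False),
]
alerts = evaluate("router-17", {"plan_used_pct": 86, "uptime_pct": 99.9}, sla)
print(alerts)
```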

Three Examples

A cellular edge router with these features could be used to design IoT applications where the spanning network provides only stateless connectivity, as well as applications where the spanning network is stateful and provides unique capabilities to the application. A few examples should help illustrate.

Remember the tool tracking and utilization service for truck fleets from above? This application is primarily a monitoring application, so no algorithms need to reside and run at the edge and the spanning network simply passes data from RFID tags on tools through the truck’s cellular edge router and up to the cloud. No application aggregator (the Ethernet push device described earlier) would be needed in the wireless mesh because no state or semantics reside there. However, IPv6 addressing and DHCP from the wireless mesh all the way through to the cloud would be very beneficial and easily delivered by this cellular edge router.

Similar to the tool tracking example, imagine a service for tracking a department’s fleet of police cars. This application is also primarily a monitoring application without algorithms at the edge, but there are additional store and forward semantics that would improve data collection and bandwidth utilization. Desired data might include location of the vehicle, of course, but also identifiers for the officers in or near the vehicle as well as gun access and stow events. An application aggregator, in this case, would be needed in the wireless mesh and would include a GPS chip for location, an RFID receiver for officer and gun identification plus a holster detector. These monitoring payloads would be packaged up by the aggregator and then passed through the Ethernet interface of the cellular edge router to the cloud. The cellular edge router would know nothing of the application beyond throughput requirements.

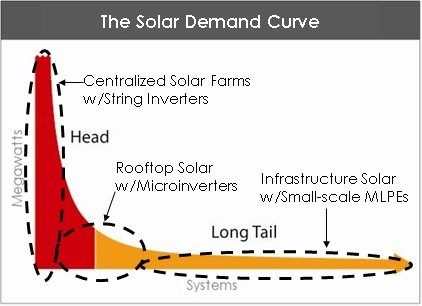

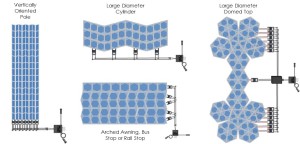

At the other end of the spectrum, imagine a mesh of off-grid solar lights that coordinate a shared dusk and dawn demarcation for a cloud-based lighting schedule. Each solar light has a solar collector and can detect dawn and dusk, but these detections will vary from light to light causing a rolling turn on across the entire mesh. For a single on and off across all lights, a coordinated on/off can be determined by majority. Once a majority of the lights within the mesh detect dusk, for example, all lights turn on simultaneously. Ditto for dawn, except that the lights turn off. These semantics are handled within the mesh rather than in the cloud to eliminate the data throughput requirements and connectivity latency. Here an application aggregator would be required, with the algorithm to determine “majority” and then broadcast the turn on or turn off message to the mesh. This aggregator would include a wireless mesh radio so that it can communicate with all the solar light nodes in the mesh, as well as a processor to execute the coordinated on/off algorithm and an Ethernet chip for communications with the cellular edge router. Though more functionality resides at the edge in this example, the cellular edge router still knows nothing about these semantics because they are encapsulated in the application aggregator.
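The majority rule the aggregator would run can be sketched in a few lines. This is an illustrative assumption about how such an aggregator might work, not a description of a shipping product; the class name, the `"ON"`/`"OFF"` broadcast values and the node IDs are hypothetical, and the actual mesh radio broadcast is left out.

```python
class DuskDawnCoordinator:
    """Turns all lights on or off once a majority of nodes detect dusk or dawn."""

    def __init__(self, node_ids):
        self.nodes = set(node_ids)
        self.lights_on = False
        self.voters = set()  # nodes that have detected the pending transition

    def report(self, node_id, event):
        """Record a detection; return "ON"/"OFF" to broadcast, or None."""
        if node_id not in self.nodes or event not in ("dusk", "dawn"):
            return None
        wants_on = event == "dusk"
        if wants_on == self.lights_on:
            return None  # late or duplicate detection for the current state
        self.voters.add(node_id)
        if 2 * len(self.voters) > len(self.nodes):  # strict majority
            self.lights_on = wants_on
            self.voters.clear()  # start fresh for the next transition
            return "ON" if wants_on else "OFF"
        return None

# Five hypothetical solar lights detecting dusk at slightly different times.
coordinator = DuskDawnCoordinator(["n1", "n2", "n3", "n4", "n5"])
results = [coordinator.report(n, "dusk") for n in ["n1", "n2", "n3", "n4"]]
print(results)  # [None, None, 'ON', None]
```

Only the resulting on/off state would ever need to travel through the cellular edge router, which is why the mesh-side approach saves both backhaul data and latency.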

A Missed Opportunity

Nobody seems to be targeting IoT application developers and building this cellular edge router, which seems like a missed opportunity. Fifty billion reasons seem like plenty of motivation. I wonder where the takers are.